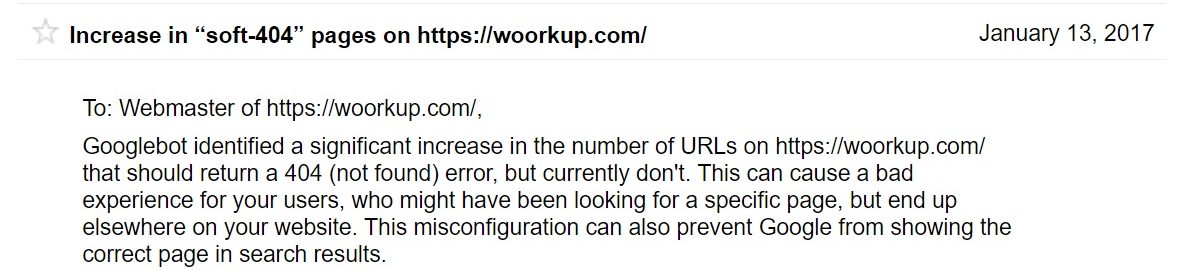

This past week I have been getting a lot of notifications from Google Search Console about a drastic increase in soft 404 errors. Usually, when you get a notification directly from Google, there is something you need to fix, so you should generally never ignore these. Below is one way to fix soft 404 errors in WordPress.

What are Soft 404 Errors?

You are probably all familiar with standard 404 errors, which mean a page doesn’t exist. A soft 404 error occurs when a non-existent page displays a “page not found” message to visitors but fails to return an HTTP 404 status code (it usually returns 200 OK instead). Soft 404s can also occur when a non-existent page redirects users to an irrelevant page, such as the homepage, instead of returning an HTTP 404 status code. The important thing to remember is that what a page displays is entirely separate from the HTTP status code the server returns.

Google has a great analogy to explain soft 404 errors:

Just because a page displays a 404 File Not Found message doesn’t mean that it’s a 404 page. It’s like a giraffe wearing a name tag that says “dog.” Just because it says it’s a dog, doesn’t mean it’s actually a dog. Similarly, just because a page says 404, doesn’t mean it’s returning a 404.
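To make the distinction concrete, here is a minimal Python sketch of how you might flag a suspected soft 404 from a response you have already fetched. The function name classify_response and the list of “not found” phrases are hypothetical. The phrases are an assumption, so adjust them to whatever your theme actually prints. The sketch looks only at the status code, the redirect target, and the page text. It illustrates the giraffe-versus-dog distinction and is not Google’s actual detection logic.

```python
from typing import Optional
from urllib.parse import urlparse

# Assumed wording of a "not found" page; adjust to match your own theme.
NOT_FOUND_PHRASES = ("page not found", "nothing found", "no results")


def classify_response(status_code: int, body: str, location: Optional[str] = None) -> str:
    """Label a response as 'hard 404', 'soft 404 (suspected)' or 'ok'.

    A rough heuristic for illustration only, not Google's algorithm.
    """
    if status_code in (404, 410):
        # The server itself says the page is gone: a real (hard) 404.
        return "hard 404"
    if status_code == 200 and any(p in body.lower() for p in NOT_FOUND_PHRASES):
        # The page *says* it is missing, but the server reports success.
        return "soft 404 (suspected)"
    if status_code in (301, 302) and location is not None and urlparse(location).path in ("", "/"):
        # A missing page redirected to the homepage instead of returning 404.
        return "soft 404 (suspected)"
    return "ok"


print(classify_response(404, "Not Found"))               # hard 404
print(classify_response(200, "<h1>Nothing Found</h1>"))  # soft 404 (suspected)
print(classify_response(301, "", location="/"))          # soft 404 (suspected)
```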

Hallam Internet also has an in-depth article on the effect of soft 404 errors on rankings, which I recommend reading.

How to Fix Soft 404 Errors in WordPress

Below is the soft 404 warning I got in Google Search Console.

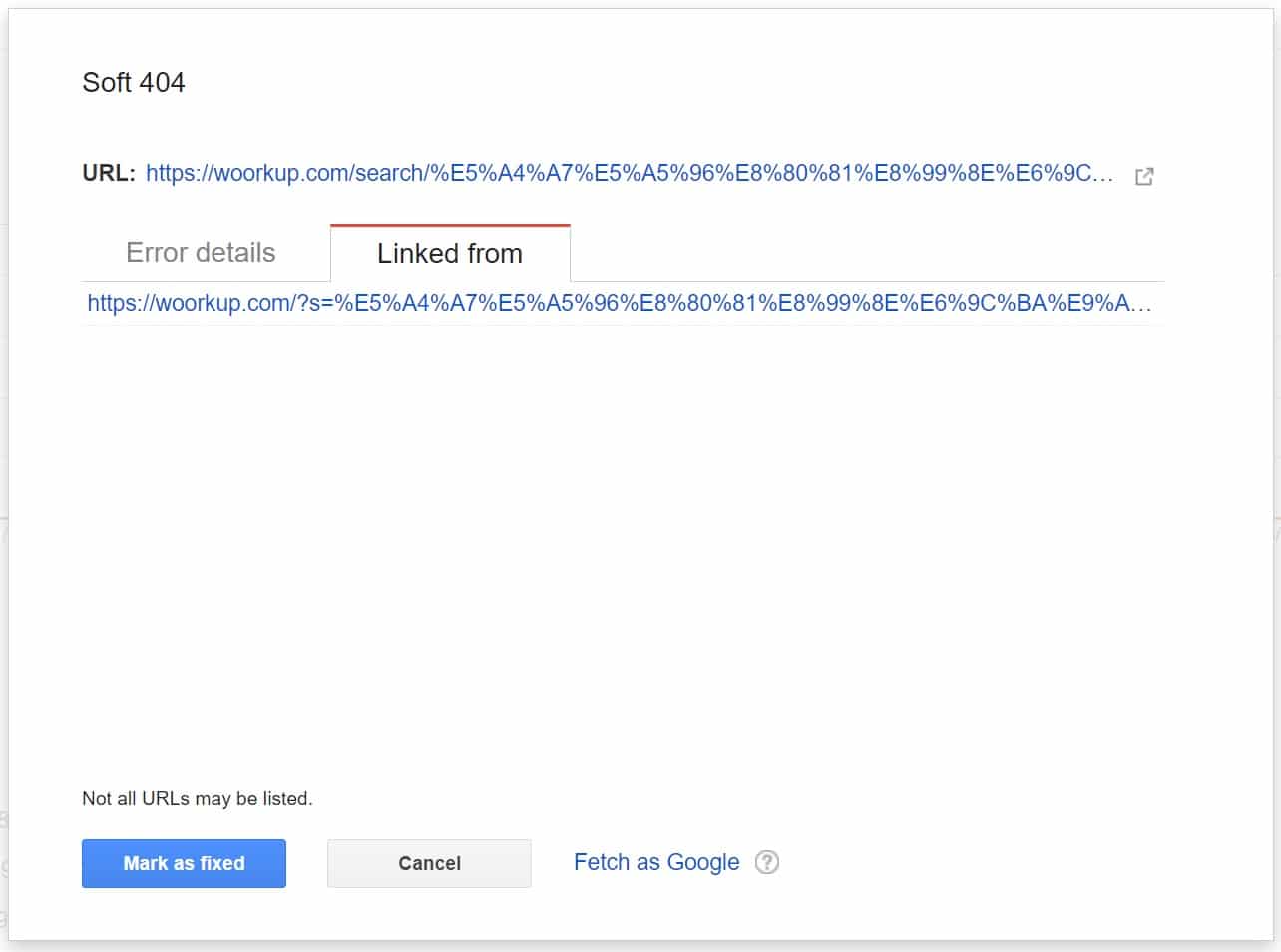

A bunch of them were being linked from a weird domain: 88Q82019309.com.

Step 1

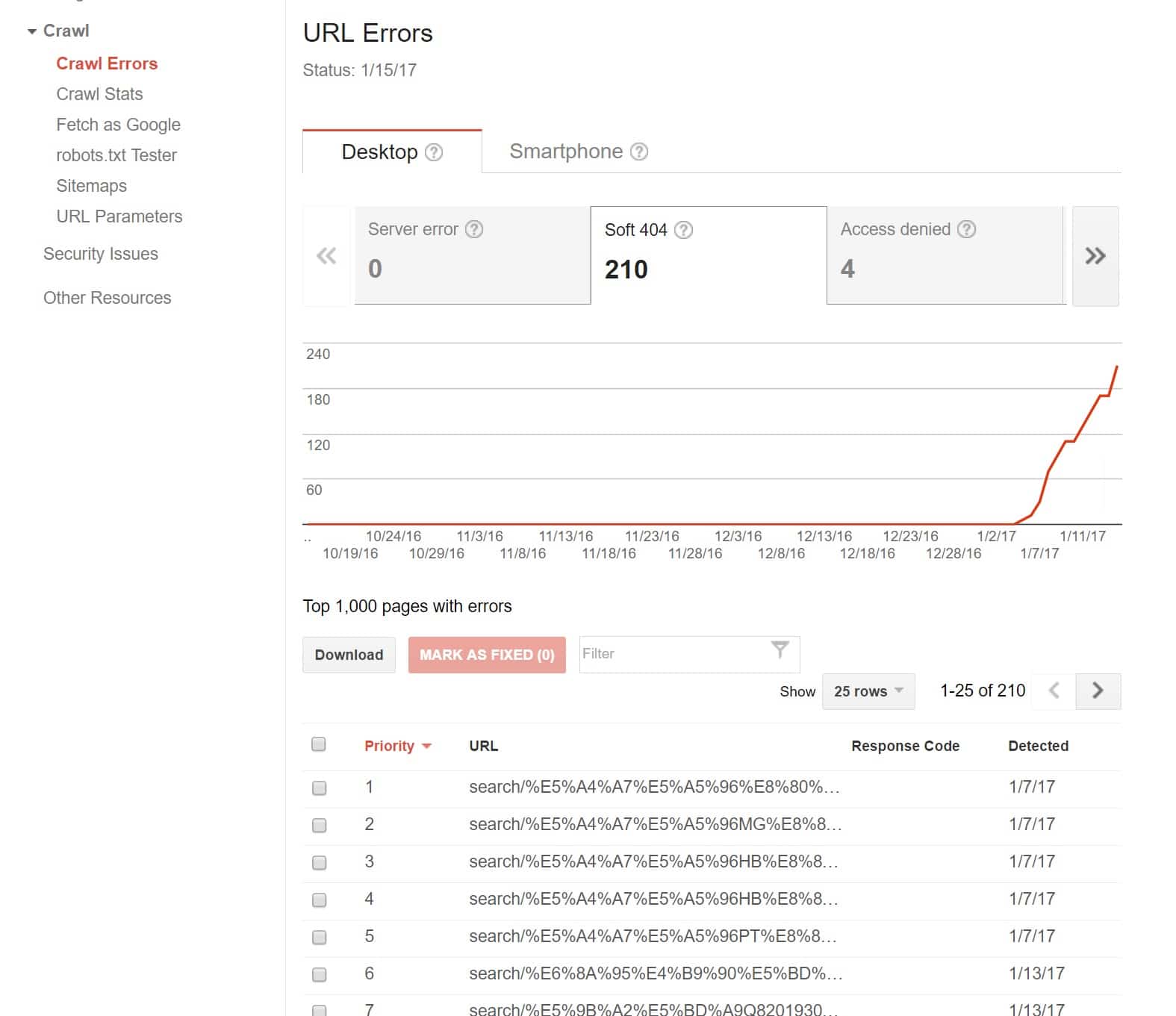

The first thing you will want to do is click into “Crawl Errors” in Google Search Console, and then into the “Soft 404” tab. As you can see in the screenshot, I suddenly started getting a bunch of soft 404 errors.

Step 2

Click into one of the errors. In my case, you can see they are coming from the search functionality on my WordPress site. Most likely this is a spammer of some sort rapidly running query strings through the search form. Each of those search result pages has nothing to show, yet WordPress still serves it with a normal 200 status, so Google reports it as a soft 404.
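If you export the list of affected URLs from Search Console, you can classify them to confirm that they all come from the search feature. The following minimal Python sketch assumes the two WordPress search URL shapes discussed in this post: the ?s= query parameter and the /search/ path. The function names and the example URLs are hypothetical.

```python
from collections import Counter
from urllib.parse import urlparse, parse_qs


def search_url_kind(url: str) -> str:
    """Return 'query' for ?s= URLs, 'path' for /search/ URLs, else 'other'."""
    parts = urlparse(url)
    # keep_blank_values so that an empty "?s=" still counts as a search URL
    if "s" in parse_qs(parts.query, keep_blank_values=True):
        return "query"
    if parts.path.startswith("/search/"):
        return "path"
    return "other"


def tally(urls):
    """Count how many reported URLs fall into each category."""
    return Counter(search_url_kind(u) for u in urls)


# Hypothetical URLs standing in for a Search Console export.
reported = [
    "https://example.com/?s=88Q82019309",
    "https://example.com/search/88Q82019309/",
    "https://example.com/hello-world/",
]
print(tally(reported))
```

If nearly everything lands in “query” or “path”, the search form is the source and the robots.txt change below is relevant.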

Step 3

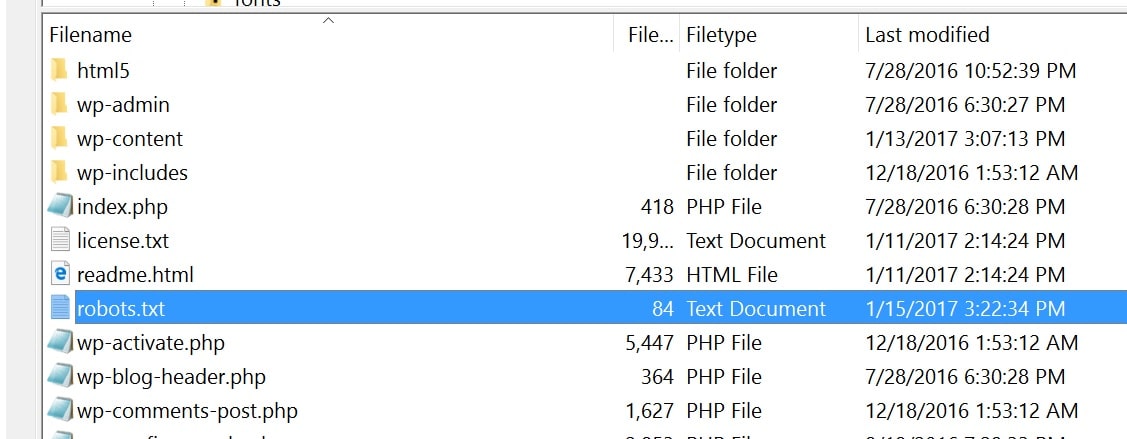

One way to prevent this is to block crawlers from accessing WordPress search URLs, which means modifying the robots.txt file on my WordPress site. The robots.txt file lets you control how Google crawls your site, and it sits at the root of your site (e.g. yourdomain.com/robots.txt). You will need to download it via FTP, edit it, and re-upload it. If there is no physical file, WordPress serves a virtual robots.txt, in which case you can create a new file with the edited rules and upload it.

This was how my default robots.txt file looked:

User-agent: *
Disallow: /wp-admin/
Allow: /wp-admin/admin-ajax.php

After editing it, it now looks like this:

User-agent: *
Disallow: /wp-admin/
Disallow: /?s=
Disallow: /search/
Allow: /wp-admin/admin-ajax.php

I added the Disallow: /?s= and Disallow: /search/ lines. These stop Google from crawling WordPress search result pages in both the query-string form (/?s=) and the /search/ permalink form. Be very careful when editing your robots.txt file, as a mistake can harm your indexing.
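Before uploading, you can sanity-check the new rules locally. The sketch below uses Python’s standard urllib.robotparser module, with example.com as a placeholder domain. One caveat applies: Python’s parser uses the first matching rule in file order rather than Google’s “most specific rule wins” behavior. For overlapping lines such as the admin-ajax.php Allow, its answer may therefore differ from Googlebot’s. The search URLs only match their own Disallow lines, so for those it is a reasonable check.

```python
from urllib.robotparser import RobotFileParser

# The edited robots.txt from this post, one directive per line.
ROBOTS_TXT = """\
User-agent: *
Disallow: /wp-admin/
Disallow: /?s=
Disallow: /search/
Allow: /wp-admin/admin-ajax.php
"""


def build_parser(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt text without fetching anything over the network."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


parser = build_parser(ROBOTS_TXT)
for url in (
    "https://example.com/?s=88Q82019309",  # search via query string
    "https://example.com/search/spam/",    # search via /search/ path
    "https://example.com/hello-world/",    # a normal post
):
    print(url, "allowed" if parser.can_fetch("Googlebot", url) else "blocked")
```

The two search URLs should report blocked, and the post should report allowed. Rules are prefix matches, so Disallow: /?s= only covers searches that start at the site root. A URL such as /page/2/?s=... is not matched by it.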

Step 4

After editing and re-uploading your robots.txt file, you should clear out the warnings in Google Search Console. Then wait a few days and make sure no new ones come back. Note that this might not always fix them, because blocking crawling does not necessarily keep URLs out of the index. As John Mueller of Google explains:

One thing you need to keep in mind is that disallows in the robots.txt will just disallow crawling, it will have less of an impact on actual indexing. If we have reason to believe that a URL which is disallowed from crawling is relevant, we may include it in our search results with whatever information we may have (if we’ve never crawled it, we may just include the URL — if we’ve crawled it in the past, we may include that information).

To prevent URLs like these from being indexed, I would recommend that you have the server 301 redirect to the appropriate canonical (and of course not link to the incorrect one). – John Mueller, Webmaster Trends Analyst at Google (source: Builtvisible)

Summary

There are other scenarios that will cause soft 404 errors to show up in Google Search Console, but the example above shows one way to combat them. If this tutorial on how to fix soft 404 errors in WordPress was helpful, let me know below.

This is great, just what I needed. How do you handle certain soft 404 errors that come from deep .JS files within your WP theme that are not really accessible?

Extremely helpful guide, Brian! I’ve gotten similar soft 404 errors on my site too. Going to update my robots.txt file today.

Glad it was helpful Dominique. I just updated with another parameter /search/ which you might also need to add. I noticed this format started showing up after the /?s= stopped.

Thanks for the guide, Brian. I’ve also gotten a lot of soft 404 errors on my site lately. Going to try your solution. /Peter

Hopefully it helps Peter! All my 404 errors have finally gone away now.

Increasing organic traffic?

I’ve been dealing with this issue for a while. I decided to switch to Google Custom Search and turn off the WordPress search function. I also prevent Google from indexing ?s= as well as /search/.

However, Google Webmaster Tools still notifies me about new soft 404 errors.

What should I do now?

Hey Tony, are you sure they aren’t delayed messages in search console about 404 errors? It took about 2 weeks for things to settle down after doing the steps above.

I did the robots.txt thing and it worked for two months, then the old soft 404s started coming back, and now a bunch of new ones are appearing. After seeing the first new one I stopped blocking them in robots hoping they would turn into hard 404s but they have not. I am going to reblock, but it does not seem to truly solve the problem. Some knowledgeable people think it’s from a hack.

Interesting, are they hitting any different URLs or variations? I have not seen any 404 errors come back after adding the above rules, so it will be hard to replicate the issue.

Just an update on my case: I couldn’t resolve it, and the only fix was to disable the WordPress search function permanently. I then implemented Google Custom Search on my website to replace WordPress search. So far so good :)

Interesting… some more bad news lol. I think Google Site Search is going away by the end of this year :( http://searchengineland.com/google-sunset-google-site-search-product-recommends-ad-supported-custom-search-engine-269834 But at least you have some time.

No. I mean this one:

https://www.google.com/cse/

Thanks for your reply. They are linking to each other. In Search Console, the “linked from” field points to another of the mydomaindotcom/ChineseURLs, or in some cases to outside Chinese sites that appear harmless. Last night I reblocked them in robots.txt, marked them as “fixed,” and removed the search bar from my site. They have not come back, but I’m waiting. I’ve blocked them and marked them “fixed” before, and they came back. Perhaps getting rid of the search bar helps stop them. I also installed a firewall the other day.

Hi tori,

I would recommend disabling the WordPress search function and replacing it with Google Custom Search, something like this:

http://i.imgur.com/L4sW0jr.png

For sure, you will get rid of this annoying issue.

I looked, but there are no recently updated plugins for Google Custom Search; the newest one is 11 months old. I know it can be done without a plugin, but it’s beyond my skills. I don’t understand how to insert HTML into WordPress; the instructions are too vague.

Good news, they are still gone as of tonight!! Hope they do not come back. I had Sucuri firewall installed. I think what did it though is removing the search bar.

Thanks for the tutorial and the easy workaround, Brian. I did as you described and hope the soft 404 errors will be gone. Although I doubt the solution is perfect: all Google Analytics on-site search results will be gone as well, won’t they?

IMO it would be better to adapt search.php so that pages with search results (have_posts() returns true) are delivered with HTTP status 200, and pages where have_posts() returns false are delivered with HTTP status 404.

Would be interested in your opinion.
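Marco’s idea can be illustrated outside WordPress. The snippet below is a hypothetical, minimal Python WSGI app, not WordPress code. It returns 200 only when a search has matches and 404 otherwise, so an empty results page can no longer be a soft 404. The in-memory POSTS list and the function names are stand-ins for the real WordPress query. In WordPress the equivalent change would live in PHP in the search template, which this sketch does not cover.

```python
from urllib.parse import parse_qs

# Hypothetical stand-in for the site's posts.
POSTS = ["Hello world", "How to fix soft 404 errors in WordPress"]


def search_app(environ, start_response):
    """WSGI app: 200 when the ?s= search has results, 404 when it has none."""
    params = parse_qs(environ.get("QUERY_STRING", ""))
    term = params.get("s", [""])[0].strip().lower()
    matches = [title for title in POSTS if term and term in title.lower()]
    if matches:
        status, body = "200 OK", "\n".join(matches)
    else:
        # Empty or missing search: tell crawlers this page does not exist.
        status, body = "404 Not Found", "Nothing found"
    start_response(status, [("Content-Type", "text/plain; charset=utf-8")])
    return [body.encode("utf-8")]


def fetch_status(query_string: str) -> str:
    """Call the app directly, without a server, and return its status line."""
    seen = {}

    def start_response(status, headers):
        seen["status"] = status

    b"".join(search_app({"QUERY_STRING": query_string}, start_response))
    return seen["status"]


print(fetch_status("s=soft+404"))     # 200 OK
print(fetch_status("s=88Q82019309"))  # 404 Not Found
```

The robots.txt rules only stop Google from crawling search URLs. This approach instead fixes the mismatch between what the page says and the status code it returns.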

Hey Marco, Google Analytics is JavaScript that runs on your own site… this doesn’t block that traffic from showing up. I still have my analytics showing /?s% data, even with the above rules in place.

Wow. Will let you know about my experience in a couple of days ;) Cheers!